The Problem

1cFE is asking a specific question: what must be true for fusion energy to reach a levelized cost of electricity at or below $0.01/kWh?

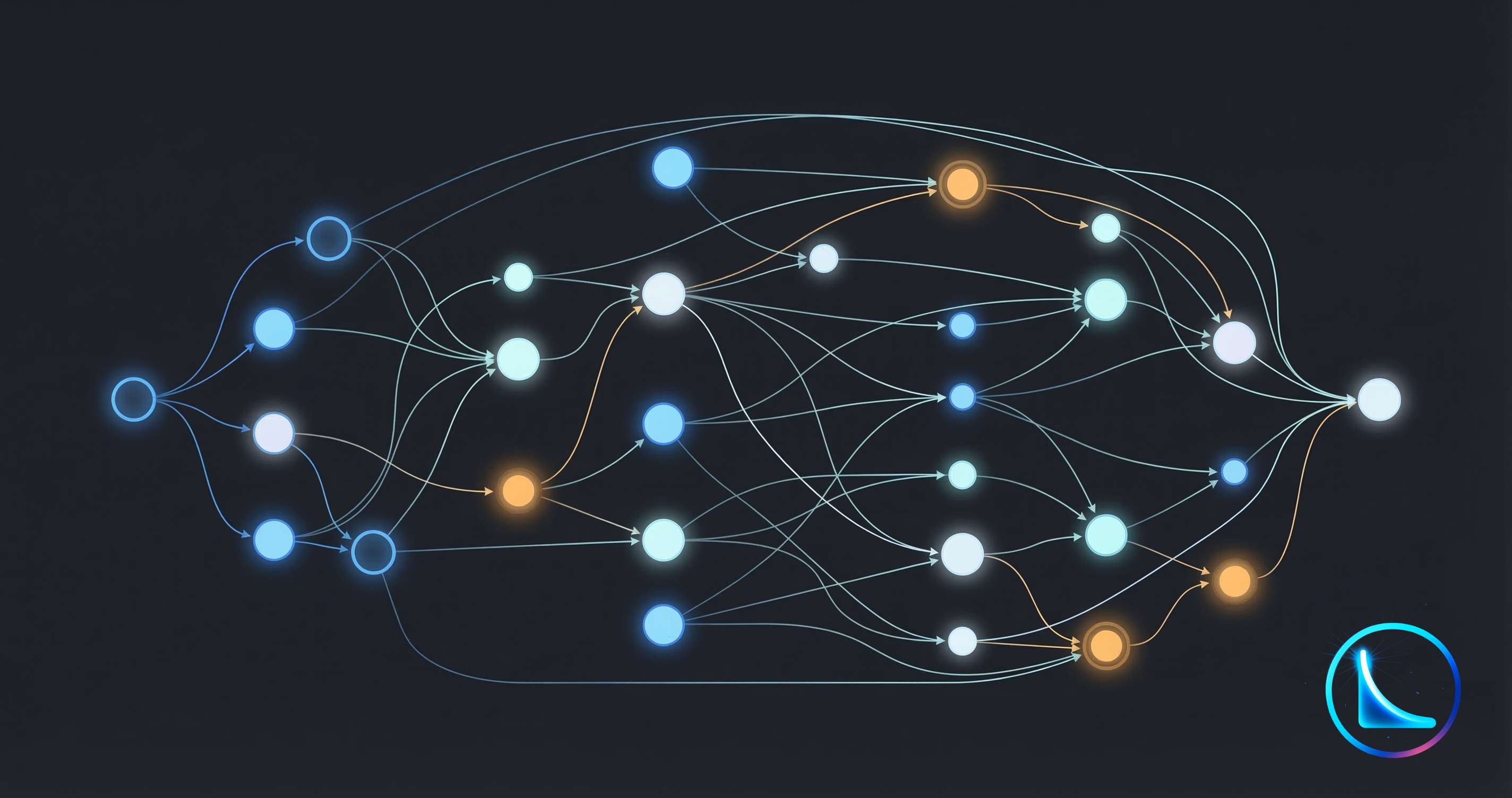
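To make the target concrete, here is a minimal sketch of a simplified levelized cost calculation and its inverse: the capital cost budget implied by a cost target. This is a textbook-style simplification (overnight capital annualized with a capital recovery factor, plus fixed and variable operating costs), not the 1cFE model. All function names and every numeric input in the usage lines are illustrative placeholders.

```python
HOURS_PER_YEAR = 8760


def capital_recovery_factor(discount_rate: float, lifetime_years: int) -> float:
    """Fraction of overnight capital that must be recovered each year."""
    if discount_rate == 0:
        return 1.0 / lifetime_years
    growth = (1.0 + discount_rate) ** lifetime_years
    return discount_rate * growth / (growth - 1.0)


def lcoe_usd_per_kwh(capex_usd_per_kw: float,
                     fixed_om_usd_per_kw_yr: float,
                     variable_usd_per_kwh: float,
                     capacity_factor: float,
                     discount_rate: float,
                     lifetime_years: int) -> float:
    """Simplified LCOE: annualized fixed costs per kWh plus variable costs."""
    annual_kwh_per_kw = HOURS_PER_YEAR * capacity_factor
    crf = capital_recovery_factor(discount_rate, lifetime_years)
    annual_fixed = capex_usd_per_kw * crf + fixed_om_usd_per_kw_yr
    return annual_fixed / annual_kwh_per_kw + variable_usd_per_kwh


def capex_budget_usd_per_kw(target_lcoe_usd_per_kwh: float,
                            fixed_om_usd_per_kw_yr: float,
                            variable_usd_per_kwh: float,
                            capacity_factor: float,
                            discount_rate: float,
                            lifetime_years: int) -> float:
    """Invert the LCOE formula: the largest capex that still meets the target."""
    annual_kwh_per_kw = HOURS_PER_YEAR * capacity_factor
    crf = capital_recovery_factor(discount_rate, lifetime_years)
    allowed_annual_fixed = (
        (target_lcoe_usd_per_kwh - variable_usd_per_kwh) * annual_kwh_per_kw
    )
    return (allowed_annual_fixed - fixed_om_usd_per_kw_yr) / crf


# Placeholder inputs, chosen only to exercise the functions.
budget = capex_budget_usd_per_kw(
    target_lcoe_usd_per_kwh=0.01,
    fixed_om_usd_per_kw_yr=10.0,
    variable_usd_per_kwh=0.001,
    capacity_factor=0.9,
    discount_rate=0.07,
    lifetime_years=30,
)
print(f"Allowed overnight capex: {budget:.0f} USD/kW")
```

Running the calculation backward from the target, rather than forward from a design, is the framing that the rest of this post builds on.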

Answering that question requires searching across a large design space. Fusion has many candidate architectures, and the decision tree expands quickly. Magnetic confinement or inertial? If magnetic, what magnet topology and what materials? Magnetic confinement is better studied, but tends toward large structures and higher capital expenditure floors. Inertial confinement could be physically simpler to build, but plant economics become acutely sensitive to driver efficiency. Then there is the fuel question: deuterium-tritium (neutronic) or aneutronic fuels like p-B11, each with fundamentally different implications for shielding, materials degradation, and balance of plant.

Each branch of this tree requires meaningful work just to produce a rough estimate of the costs and challenges involved. In practice, engineering teams navigate this with whiteboard sketches, rough CAD, spreadsheets, and a lot of expert judgment. Over this process looms a tension that is familiar to anyone who has worked on ambitious hardware:

Do you lock down decisions early to keep the remaining design space manageable, and risk committing to future pain or marginal economics? Or do you keep options open and risk analysis paralysis, never pursuing any path long enough to learn something real?

For most engineering programs, this tension is managed through experience and sequential iteration. But 1cFE is not designing a fusion reactor. We are trying to map the corridors across the full design space where sub-cent economics might be achievable. That requires a different kind of search: broader, more systematic, and fast enough to be useful within a bounded timeline.

This post describes the tooling we are building to make that search possible, and the verification practices we use to avoid fooling ourselves along the way.

What We Are Not Doing

Before describing the approach, it is worth being explicit about scope.

Current AI models cannot take high-level concepts and drive them to good engineering designs. There are limitations across the engineering process that prevent meaningful AI automation of detail design today (a topic for a future post). And until you get into the thick of engineering, with prototyping, supply chain realities, and manufacturing constraints, there is an inherent limit to what analysis alone can give you.

We are not attempting to automate reactor design. What we are attempting is narrower and, we think, tractable:

Estimate long-term cost floors for different fusion approaches at a level of fidelity sufficient for corridor mapping.

Identify the assumptions that matter most: which physics parameters, materials choices, or engineering decisions most strongly determine whether a concept can reach sub-cent economics.

Derive focused development priorities: if you can say “these three things must be true for this corridor to work,” you can derive more targeted development plans than “start designing and building the full system.”

To do this across many concepts systematically, we need formalism. We need a way to represent fusion design concepts that is structured enough for automated analysis, flexible enough to capture meaningfully different architectures, and verifiable enough that we can trust the outputs.

Why SysML v2

SysML (Systems Modeling Language) has been used for decades to capture design concepts across mechanical, electrical, and software domains. Historically, it has been adopted most in aerospace and defense for later-stage systems engineering: requirements management, verification and validation. Its use in early-stage design exploration has been limited, largely because the tooling was GUI-driven, laborious, and required dedicated systems engineering expertise.

SysML v2 changes the equation in ways that matter for what we are trying to do.

First, the language itself is more flexible. Version 2 differentiates a “definition” from a “usage” (an instance of a definition), which makes trade studies intuitive. You define what a Magnet is in general terms, its parameters, interfaces, and cost structure, and then create specific usages like HTS_Magnet_A or LTS_Magnet_B with different parameter values. This maps naturally to the way we need to explore the design space: define component archetypes once, then instantiate and compare many variants.
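For readers more at home in software than in systems modeling, the definition/usage split is loosely analogous to a class and its instances. The sketch below is a Python analogy only, not SysML v2 syntax; the attribute names and all values are made-up placeholders.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class Magnet:
    """Plays the role of a definition: what every magnet variant must specify."""
    peak_field_tesla: float
    operating_temp_kelvin: float
    cost_usd_per_kg: float


# These play the role of usages: named variants of the same definition.
# All numbers are placeholders, not sourced values.
hts_magnet_a = Magnet(peak_field_tesla=20.0,
                      operating_temp_kelvin=20.0,
                      cost_usd_per_kg=500.0)
lts_magnet_b = Magnet(peak_field_tesla=12.0,
                      operating_temp_kelvin=4.0,
                      cost_usd_per_kg=200.0)

# A further variant changes one parameter and inherits the rest.
hts_magnet_a_cheaper = replace(hts_magnet_a, cost_usd_per_kg=300.0)

variants = {
    "HTS_Magnet_A": hts_magnet_a,
    "LTS_Magnet_B": lts_magnet_b,
    "HTS_Magnet_A_cheaper": hts_magnet_a_cheaper,
}

# A trade study then becomes a comparison across variants of one definition.
for name, magnet in sorted(variants.items(), key=lambda kv: kv[1].cost_usd_per_kg):
    print(name, magnet.peak_field_tesla, magnet.cost_usd_per_kg)
```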

Second, and critically for our purposes, SysML v2 supports textual notation. Previous versions were purely graphical, requiring specialized GUI tools. Textual notation means models can be written, read, and diffed like source code. This unlocks the entire ecosystem of modern software tooling:

Git. Version control, branching, pull requests, and collaborative review. Hardware teams have long envied what software teams take for granted here. With textual models, we get it.

AI coding tools. Code generation and code understanding are among the strongest current AI capabilities. By representing system models as text, we can leverage these capabilities directly. This is not a speculative bet: coding agents are among the most mature AI applications today, and textual SysML lets us build on that foundation.

We are using SysIDE from Sensmetry to parse textual models into navigable abstract syntax trees (ASTs), which gives us programmatic access to model structure for both analysis and verification.

A note on expertise: the 1cFE team includes people with experience in complex hardware-software systems and in writing software, but we are not formally trained systems engineers. We have been captured by the potential of SysML v2 for this kind of systematic exploration. We expect to get things wrong, and we are actively seeking feedback from the systems engineering community on how to do this well.

The Verification Stack

Using AI to generate or modify system models creates an obvious problem: how do you know the output is any good?

LLMs are stochastic. You can ask the same question twice and, with minor changes to context, get meaningfully different answers. If you let an agent run in loops performing unstructured research, you accumulate vast amounts of text that is redundant and difficult to make sense of. Asking AI to “come up with design concepts” without structure and verification will not produce reliable outputs.

For software, this problem is addressed with tests: unit tests, integration tests, type checkers, linters. A well-tested codebase gives an AI coding agent immediate feedback on whether its changes broke something. We need the equivalent for system models.

To that end, we have built a six-level hierarchy of automated model validation. Each level catches a different class of error, and together they provide a reasonable (though not complete) check on model quality.

- Level 1: Syntax. Does the SysML parse correctly? This is the equivalent of “does it compile.” If the model has syntax errors, nothing downstream matters.

- Level 2: Structure. Are there unused definitions, unbound calculation inputs, or incomplete bindings? Orphaned components often indicate that the model is incomplete or that an agent created something redundant. Inputs bound to undefined attributes indicate broken data flow.

- Level 3: Dataflow. Are there circular imports between packages? Circular dependencies prevent deterministic model loading and indicate architectural problems in how packages reference each other.

- Level 4: Constraints. What fraction of elements and signals have physical constraints? If too many parameters are unconstrained, the model is too theoretical to produce meaningful cost or performance estimates. We track constraint coverage metrics; automated threshold enforcement is on our roadmap.

- Level 5: Traceability. Are sources documented in the models? Every parameter value and assumption should trace to a source: a paper, a dataset, a domain expert’s input, or an explicit assumption. We currently check documentation coverage; automated source citation verification is planned. This is not just good practice; it is essential for the 1cFE mission, where we need reviewers and collaborators to be able to check our work.

- Level 6: Architecture. Our method of model execution (detailed in a future post on the technoeconomic analysis, or TEA, pipeline) places additional structural requirements on how models are organized. This level verifies that calculation definitions are in the right places, that expressions follow supported patterns, that design parameter values are extractable, and that models conform to the conventions needed for automated cost and performance analysis. This is the layer where application-specific rules live.
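To give a flavor of what two of these checks involve, here is a minimal sketch of a Level 3 style circular-import detector and a Level 4 style coverage metric. It assumes the package import graph and the per-parameter constraint flags have already been extracted from the parsed model into plain dictionaries; the function names and data shapes are hypothetical and do not reflect the actual 1cFE implementation.

```python
from typing import Dict, List, Optional

WHITE, GRAY, BLACK = 0, 1, 2  # unvisited, on the current DFS path, finished


def find_import_cycle(imports: Dict[str, List[str]]) -> Optional[List[str]]:
    """Return one cycle of package names, or None if the graph is acyclic."""
    color: Dict[str, int] = {}
    path: List[str] = []

    def visit(pkg: str) -> Optional[List[str]]:
        color[pkg] = GRAY
        path.append(pkg)
        for dep in imports.get(pkg, []):
            state = color.get(dep, WHITE)
            if state == GRAY:
                # dep is already on the current path, so we closed a loop.
                return path[path.index(dep):] + [dep]
            if state == WHITE:
                cycle = visit(dep)
                if cycle:
                    return cycle
        path.pop()
        color[pkg] = BLACK
        return None

    for pkg in imports:
        if color.get(pkg, WHITE) == WHITE:
            cycle = visit(pkg)
            if cycle:
                return cycle
    return None


def constraint_coverage(has_constraint: Dict[str, bool]) -> float:
    """Fraction of parameters that carry at least one physical constraint."""
    if not has_constraint:
        return 0.0  # an empty model is treated as uncovered
    return sum(has_constraint.values()) / len(has_constraint)


# Hypothetical package names and parameters, for illustration only.
print(find_import_cycle({"designs": ["library"], "library": ["materials"]}))
print(find_import_cycle({"a": ["b"], "b": ["c"], "c": ["a"]}))
print(constraint_coverage({"peak_field": True, "blanket_thickness": False}))
```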

These six levels are implemented as automated checks that run after every model modification. They serve the same function as a CI/CD pipeline in software: they create a fast feedback loop that catches errors early and gives both humans and AI agents clear signals about model quality.
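The CI-style loop can be sketched as a small runner that applies checks in level order and reports errors per level. This is an illustrative skeleton under our own assumptions: the two checks are crude text-based stand-ins (a real Level 1 check would use a SysML v2 parser), and stopping after a failed syntax level is one possible policy, motivated by the observation that nothing downstream matters if the model does not parse.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass
class CheckResult:
    level: int
    name: str
    errors: List[str]

    @property
    def passed(self) -> bool:
        return not self.errors


Check = Callable[[str], List[str]]


def check_balanced_braces(model_text: str) -> List[str]:
    """Stand-in for a syntax check; a real one would invoke a parser."""
    depth = 0
    for ch in model_text:
        if ch == "{":
            depth += 1
        elif ch == "}":
            depth -= 1
            if depth < 0:
                return ["unmatched '}'"]
    return [] if depth == 0 else [f"{depth} unclosed '{{'"]


def check_has_documentation(model_text: str) -> List[str]:
    """Stand-in for a traceability check: is there any doc text at all?"""
    return [] if "doc" in model_text else ["no documentation found"]


# Hypothetical, shortened stack: only two stand-in checks are registered.
CHECKS: List[Tuple[str, Check]] = [
    ("syntax", check_balanced_braces),
    ("traceability", check_has_documentation),
]


def run_stack(model_text: str, checks: List[Tuple[str, Check]]) -> List[CheckResult]:
    """Run checks in order; stop early if the first (syntax) level fails."""
    results: List[CheckResult] = []
    for level, (name, check) in enumerate(checks, start=1):
        result = CheckResult(level, name, check(model_text))
        results.append(result)
        if level == 1 and not result.passed:
            break
    return results


for result in run_stack("part def Magnet { doc /* source: placeholder */ }", CHECKS):
    print(result.level, result.name, "ok" if result.passed else result.errors)
```

Returning structured results rather than printing free text is what lets an agent, as well as a human, act on the outcome.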

The verification stack is not a complete guarantee of correctness. A model can pass all six levels and still contain wrong parameter values or flawed assumptions. But it raises the floor substantially. And for the corridor mapping exercise, where we need to process many concepts at a pace that makes the search feasible, automated quality checks are not optional.

The Agentic Workflow

With a formalism (SysML v2) and a verification mechanism (the six-level stack), the remaining challenge is the workflow: how do you actually use AI agents to accelerate the process of building and analyzing system models?

Our approach borrows directly from effective practices in agentic software development. In software, a useful rule of thumb is that if your codebase is easy for a new human contributor to start working in, AI agents will perform well too. The same principle applies to model repositories.

Foundation: Making the Repository AI-Friendly

We organize the model repository to create structure and symmetry that both humans and agents can navigate.

A library/ package contains generic, reusable component definitions: magnets, blankets, plasma systems, power conversion, materials. These are the building blocks, defined once with their parameters, interfaces, and cost structures.

A designs/ package contains specific concept implementations that instantiate and configure library definitions. Each design represents a particular fusion architecture with its own parameter values and configuration choices.

Within both packages, we enforce consistent patterns for how components are described. Every component has organized sections for capital cost calculations, physics parameters, and operating cost estimates. This consistency means that once an agent (or a human) learns how one component works, the pattern transfers to every other component.
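A consistency rule like this is straightforward to check mechanically. The sketch below assumes each component has already been summarized as a dictionary keyed by section name; the section keys and component names are hypothetical stand-ins for whatever the repository actually uses.

```python
from typing import Dict, List

# Hypothetical section keys mirroring the three sections described above.
REQUIRED_SECTIONS = ("capital_cost", "physics", "operating_cost")


def missing_sections(component: dict) -> List[str]:
    """List the required sections that a component summary lacks."""
    return [section for section in REQUIRED_SECTIONS if section not in component]


def audit_library(library: Dict[str, dict]) -> Dict[str, List[str]]:
    """Map each non-conforming component name to its missing sections."""
    report: Dict[str, List[str]] = {}
    for name, component in library.items():
        gaps = missing_sections(component)
        if gaps:
            report[name] = gaps
    return report


# Placeholder library contents, for illustration only.
library = {
    "magnet": {"capital_cost": {}, "physics": {}, "operating_cost": {}},
    "blanket": {"capital_cost": {}, "physics": {}},
}
print(audit_library(library))
```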

The repos are public:

- github.com/1cFE/agentic-mbse – the agentic modeling framework

- github.com/1cFE/sysml-codegen – SysML code generation tooling

- github.com/1cFE/fusion-tea – the technoeconomic analysis engine

Workflow: From Research to Models

For agentic performance, context is everything. The term “context engineering” has emerged in the AI development community because it points to what actually determines output quality: providing the model with all the critical data, and not too much more, along with a clear task.

Agents go off track when they try to answer a question or take an action without the right information already loaded into context, or when they over-index on misleading data. The best defense, in both software and system modeling, is a structured process that ensures the right context is available at each step.

Our modeling workflow follows this sequence:

- Build up sources. We collect and index raw data from research papers, existing modeling frameworks, domain expert inputs, and simulation results. Sources are then processed (e.g. PDF extraction), stored, and indexed for agentic search.

- Research & Backlog. Any meaningful chunk of work starts with a question, e.g. “how should we model the blanket for this MFE concept?” Agents search across data sources, invoking subagents where needed, to retrieve and synthesize relevant information. We then collaborate on the findings to build an implementation strategy and formalize it into a backlog of work.

- Build the Models. Large chunks of work (“epics”) are broken down into work items, much like features in software. We execute each work item through a multi-step process, and each step gives the user visibility and an opportunity to steer. The steps are:

  - spec: define scope and requirements

  - design: identify all points and surfaces of change

  - plan: sequence the changes with strict to-do items (like invoking the verification stack)

  - implement: execute the plan
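The spec, design, plan, implement sequence can be pictured as a small state machine with a human checkpoint between stages. The sketch below is our own illustration of that idea, not the actual pipeline: the class names are hypothetical, and requiring explicit approval before each transition is one way to interpret "visibility for steering."

```python
from enum import Enum


class Stage(Enum):
    SPEC = "spec"
    DESIGN = "design"
    PLAN = "plan"
    IMPLEMENT = "implement"


STAGE_ORDER = list(Stage)  # Enum iteration preserves definition order


class WorkItem:
    """A unit of modeling work that moves through the four stages in order."""

    def __init__(self, title: str) -> None:
        self.title = title
        self.stage = Stage.SPEC
        self.approved = set()

    def approve(self) -> None:
        """Record that a human has reviewed the output of the current stage."""
        self.approved.add(self.stage)

    def advance(self) -> Stage:
        """Move to the next stage, but only after the current one is approved."""
        if self.stage not in self.approved:
            raise PermissionError(f"{self.stage.value} has not been approved")
        index = STAGE_ORDER.index(self.stage)
        if index + 1 < len(STAGE_ORDER):
            self.stage = STAGE_ORDER[index + 1]
        return self.stage


item = WorkItem("Model the blanket for a placeholder concept")
item.approve()
print(item.advance().value)
```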

Because pre-trained LLMs have not seen a large corpus of well-written SysML v2 models, we take additional steps to reinforce quality. A dedicated SysML v2 specification agent has access to the full language spec and answers questions about modeling techniques and reviews designs against the specification. Automated review agents run at the conclusion of design and implementation to check models against our established patterns.

We are using Claude Code as the agentic backbone for this process. It provides a practical and cost-efficient way to prototype and refine the pipeline while keeping the human in the loop at every decision point.

A Worked Example

The interactive demo linked below provides a deeper dive into the methodology and showcases the workflow for a first-pass model of IFE (inertial fusion energy) economics.

https://1cfe.github.io/fusion-tea/demo/index.html

Relationship to Existing Work

Our pipeline is organized around a specific question: what must be true for any fusion concept to cost less than $0.01/kWh? That question shapes every architectural choice — concept-agnostic ingestion, uncertainty propagation, and inverse solves from a cost target backward to required parameter ranges.
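To illustrate what uncertainty propagation and an inverse solve mean in this setting, here is a toy sketch. It uses a deliberately simplified cost function, uniform input distributions, and bisection on a monotonic relationship; all of these are our assumptions for illustration, every number is a placeholder, and none of it represents the actual 1cFE pipeline.

```python
import random
from typing import Tuple

TARGET_USD_PER_KWH = 0.01


def toy_lcoe(capex_usd_per_kw: float, capacity_factor: float,
             crf: float = 0.08, fixed_om_usd_per_kw_yr: float = 10.0) -> float:
    """Toy cost model: annualized fixed costs divided by annual output."""
    return (capex_usd_per_kw * crf + fixed_om_usd_per_kw_yr) / (8760 * capacity_factor)


def fraction_meeting_target(capex_range: Tuple[float, float],
                            cf_range: Tuple[float, float],
                            samples: int = 10_000, seed: int = 0) -> float:
    """Forward propagation: sample uncertain inputs, count samples under target."""
    rng = random.Random(seed)
    hits = 0
    for _ in range(samples):
        capex = rng.uniform(*capex_range)
        capacity_factor = rng.uniform(*cf_range)
        if toy_lcoe(capex, capacity_factor) <= TARGET_USD_PER_KWH:
            hits += 1
    return hits / samples


def max_capex_meeting_target(capacity_factor: float, low: float = 0.0,
                             high: float = 10_000.0, iterations: int = 60) -> float:
    """Inverse solve: bisect for the largest capex that still meets the target.

    Assumes cost rises monotonically with capex and that the target is met
    at `low` but missed at `high`.
    """
    for _ in range(iterations):
        mid = (low + high) / 2
        if toy_lcoe(mid, capacity_factor) <= TARGET_USD_PER_KWH:
            low = mid
        else:
            high = mid
    return low


print(fraction_meeting_target((500.0, 1500.0), (0.8, 0.95)))
print(max_capex_meeting_target(0.9))
```

Bisection is used here because a realistic model is unlikely to have a closed-form inverse; for this toy function the result can be checked by hand.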

Good tools exist in the fusion sector for related but differently-scoped purposes. PyFECONS, developed by Woodruff Scientific under an ARPA-E contract, provides structured cost accounts and a parameterized approach to estimating the cost of specific fusion plant designs — the most methodical open-source framework for that question available today. PROCESS, developed at UKAEA, is a well-established systems code for tokamak parametric design. Both are serious tools doing what they were designed to do well. We use PyFECONS as a validation reference: when we model a concept it also covers, we compare results.

There is also a body of published TEA papers examining specific concepts or concept families. These are valuable references and we draw on them, but they are static: fixed assumptions, fixed concept, not structured for reuse or cross-concept comparison.

None of these is built for the question we are asking. The sub-cent question requires searching across the full space of fusion approaches under a consistent analytical framework, with uncertainty made explicit and cost targets driving the analysis rather than following from it.

What We Cannot Do Yet

This is early work, and there are significant challenges ahead.

User experience and oversight. Human judgment must drive the direction of exploration, resolve modeling conflicts, and audit the decisions agents make. Beyond that, for the models to be genuinely useful, users need to view, inspect, and interact with them in ways that match how they think. Building those surfaces is a major design challenge we have not solved.

Data management. Through the 1cFE project we are collecting data from many sources: historical and new research papers, existing modeling frameworks, insights from conversations with domain experts, and new analysis results across fusion methods. Managing this data, prioritizing and weighting different sources, handling the evolution of data and the resulting models: all of this creates requirements for traceability and versioning that we are still building out.

Model execution. Formal system models are only useful for our purposes if we can simulate them: estimating capital costs, performing energy balances, calculating fuel and operating costs, and ultimately computing levelized cost of electricity. We will discuss our solution, the sysml-codegen pipeline, in a future post.

SysML v2 modeling extensions. Some physical concepts are difficult to represent cleanly. Geometry, for instance: how do you represent basic shapes, sizes, and spatial relationships in a way that lets you check whether components physically fit, without going all the way to CAD? Process modeling, especially stateful processes, also introduces complexity. These are areas where we need to push the modeling language.

Help Us Get This Right

We are publishing this work early because we would rather get corrections now than discover blind spots later.

To systems engineers: what are your techniques for maximizing model quality and utility? If you could automate patterns and checks for your team, what would they be? We know the devil is in the details of SysML v2 modeling, and we need your input.

To design engineers working on novel hardware concepts: what would you want from the user experience? How would you want to interact with models like these to get useful information out of them?

To the fusion community: we are mapping the sub-cent design space and publishing our work. If you see something wrong, tell us. That is the point.

The repos are public at github.com/1cFE. The next post in this series will cover the technoeconomic analysis pipeline: how we go from formal system models to cost estimates.